The Four Corners of Lab Digitalization: Getting the Right Tools in Place

Biotech has long had a tech problem. We’ve said before that when it comes to modern data infrastructure and management, the life sciences are 10 to 20 years behind other industries.

Part of this is due to the very nature of the scientific process and the complexity of biotech/pharma data. Biology is, to put it lightly, complicated. Life science companies generate and collect data from a ton of different sources, including:

- Wet lab instruments and device interfaces

- In-human trials

- Manufacturing instruments and machines

- Public datasets

- Contract research organizations (CROs)/contract development and manufacturing organizations (CDMOs)

- Academic groups

- and more.

The key to bringing all this life science data into the 21st century is digitalization.

While that may seem like an overused word, we define it pretty simply: using modern applications like electronic lab notebooks (ELNs) or laboratory information management systems (LIMS) in a modern cloud-based setup or as full software as a service (SaaS) to do data-related tasks that would otherwise have been done manually. When a lab digitalizes it means they’re making the journey from paper, to paper on glass to, eventually, automation. (If you take a look at our recent Lab Digitalization Maturity Curve, you may be able to instantly recognize where your lab stands.)

The Four Corners of Lab Digitalization

Regardless of your company’s position regarding digitalization, every lab needs the right tech stack (or sandwich, as we like to say) to collect, store, manage, and analyze their data—and to make it FAIR. It takes a constellation of different software solutions to maximize the value of lab data.

We like to think of these tools in terms of a Four Corner model. This model includes two basic categories:

- Backend Solutions: The systems and infrastructure tools where logic and data is stored.

- Frontend Solutions: The software tools that scientists actually interact with on a daily basis.

Let’s dive into each of these categories a bit more.

Backend Solutions: Data and Logic/Connectivity

You can think of this category as the plumbing, HVAC, or electricity for data of your lab. This is the infrastructure that runs underneath everything, much like pipes and wires. Nothing else can happen with your data unless the backend infrastructure is well thought out and well maintained.

In short: backend solutions are the things that underpin all the fancy analysis tools and other software scientists use to do actual science.

In practicality, there are two “corners” within this category:

Data Backends

All that lab data needs to live somewhere. Data backends are where that data is stored, such as:

- Systems of record, like LIMS

- Raw data storage solutions, like a scientific data management system (SDMS), scientific data cloud (SDC), or a data lake

- Structured data storage options, like a data warehouse

What all these have in common is that they aim to gather every speck of data in the lab—and then keep it there, forever.

Logic/Connectivity Backends

This category is still infrastructural—AKA not scientist-facing—but is how the data actually gets into the storage places listed above. This could mean snippets of code that execute specific tasks, automatically ingest information into storage endpoints, etc.

Examples include:

- Bioinformatics or machine learning (ML) workflow tools

- Coding/scripting layers

- Fit-for-purpose logic, such as process analytical technology (PAT), imaging, etc.

- A lab instrumentation or app integration system

Each of these starts to introduce logic into how data flows out of instruments, machines, and other on-prem sources into the cloud.

Frontend Solutions: Data Inputs and Outputs

Frontend tools are the things scientists actually use and touch to enter and manage their data. These solutions fall into two more “corners” (bringing us to a total of four):

Data Inputs

These are places where scientists will themselves enter or work with data. Some examples of these tools include:

- Unstructured frontends, where data is added without too much structure or formality, such as an ELN

- Structured or form-based front ends that typically have more rigidity, such as a manufacturing execution system (MES), lab execution system (LES), or electronic batch records (EBRs) solution

When it comes to actually inputting data manually, automation—such as automatically uploading information from an instrument run to an ELN—will ideally replace much of the “busywork” for scientists. But by and large, they will continue to engage with data input tools daily as part of their routine work.

Data Outputs

Data outputs are the tools scientists use to take a closer look at their data and draw conclusions.

- General analysis tools, such as JMP, Prism, and Spotfire, to name a few

- File system and sharing apps

- Business intelligence tools

- Contextual analysis tools, like flow cytometry gating

This is where data gets fun for scientists—and where it starts to yield insights and results that turn into patents, papers, and eventually products.

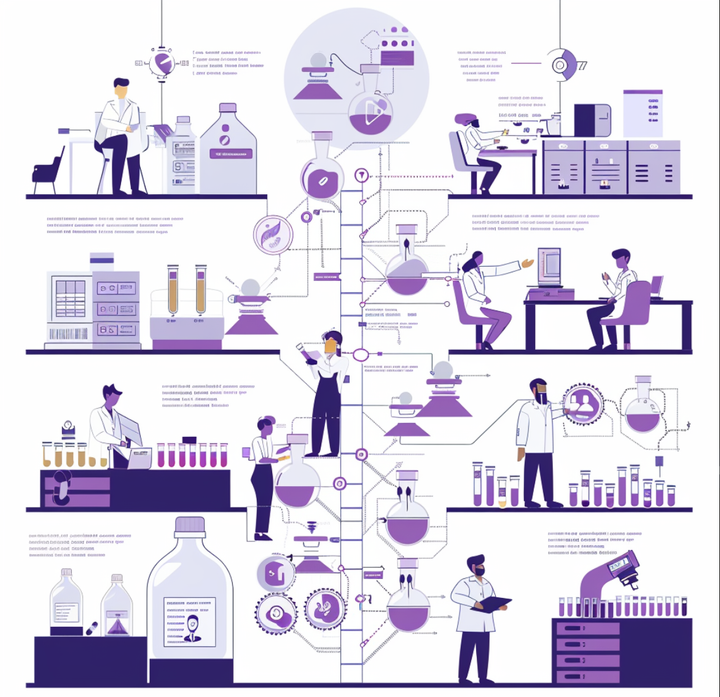

Cover Your Corners to Reach Lab Digitalization

Lab digitalization isn’t a one-stop shop. There’s no single software provider, tool, or widget that can do it all. Digitalizing a lab takes strategy, time, and the right stack of tools unique to your organization’s needs and workflows. Take a look at this graphic—as you can see, there are frontend and backend solutions at every level of a lab, from early R&D through manufacturing.

No matter how intricate your lab is, however, all organizations have the Four Corners in common. Everyone needs backend and frontend solutions to ensure data is FAIR, well-managed, secure, and actually usable. If you cover all four corners, your lab will be well on its way to digitalization—and the future of science.

Learn More About Wet Lab Digitalization